Dev Diary: Building a Canny clone in production with Claude

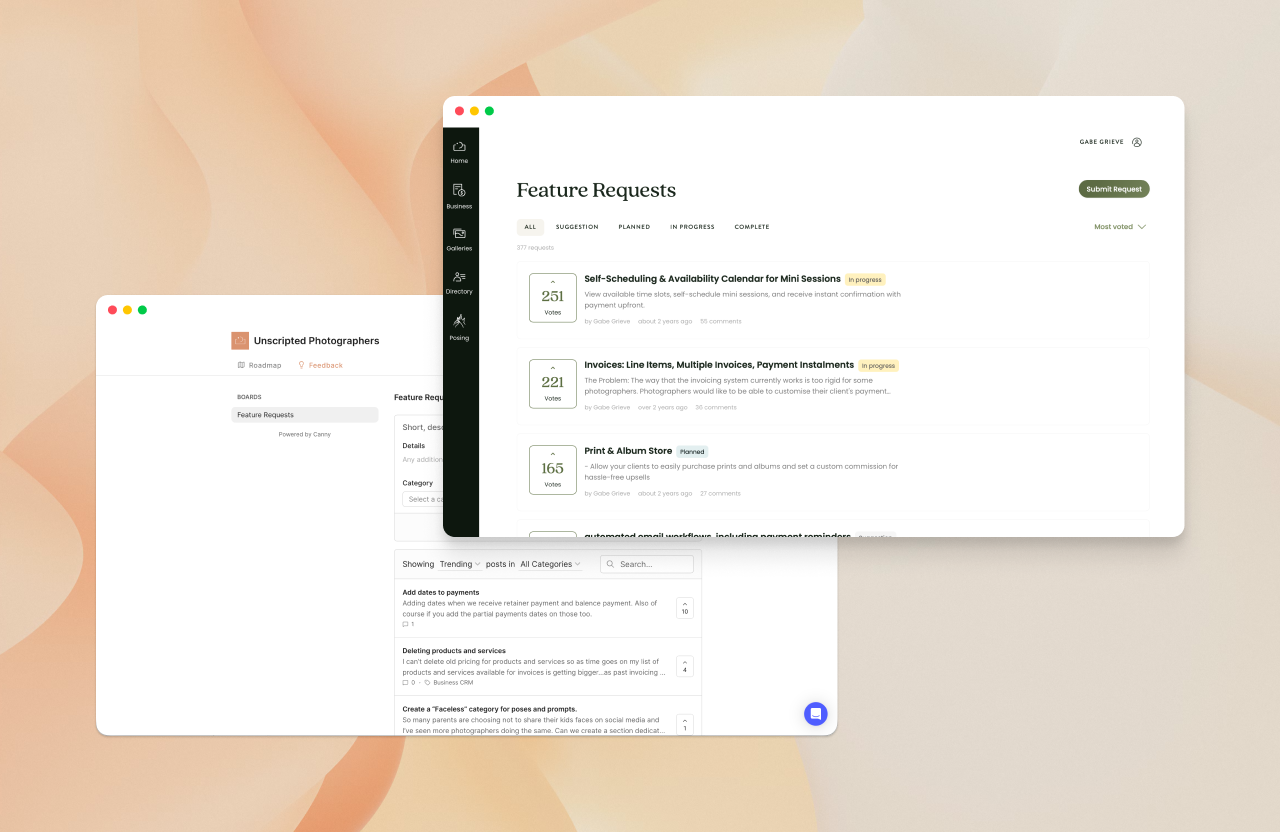

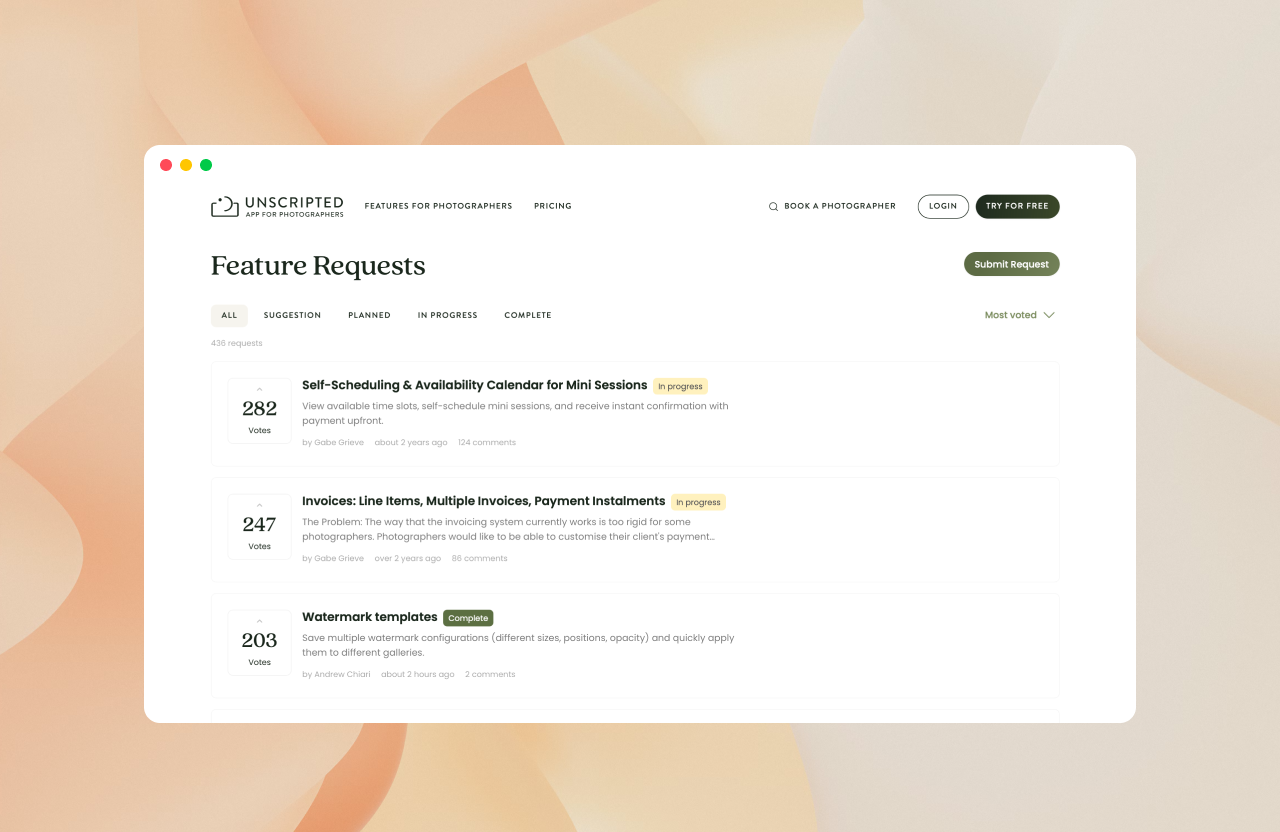

Two years ago, I set up Canny at Unscripted Photographers. It quickly became indispensable — collecting feature requests, engaging users, feeding our public roadmap, and keeping our customers updated when we shipped new features.

It also doubled as a pipeline for recruiting user testers. The customers who were regularly submitting and voting on ideas were frequently happy to jump on a prototype test or interview.

We got two years of immense value from Canny, all the while on the free plan. But at the start of 2026, Canny introduced new pricing which effectively eliminated our existing plan.

The $0 → $360/mo wake-up call

When the emails started trickling in to let us know that our beloved free plan was not long for this world, I was certainly prepared to consider an upgrade to a paid plan. However, once the prices were published, it became a much more difficult decision.

With 899 tracked users, our options were:

Pay $360 AUD/month (their lowest tier, scaling up with every 100 users)

Stay free by culling 874 users — destroying two years of accumulated requests, votes, and context

Canny was clearly betting that enough customers would absorb the cost to avoid the pain of switching. But as a relatively budget-conscious startup, we're forced to think carefully about things like this. Does the value here really exceed $4,300 AUD per year?

I distilled the value down to three problems Canny was solving:

Customers can't see what we're working on

Customers can't surface or rally behind popular requests

Consolidating feedback across channels is a time sink

Canny's public roadmap, vote merging system, and status tracking solved these problems quite elegantly for us. But in an age where designing and building product is easier than ever, is this something we need to pay a subscription for? Or can we deliver something like this ourselves for less than the cost of an annual subscription to Canny?

The buy vs. build math

Two years ago, building this in-house would have looked something like:

| Role | Time | Cost (AUD) |
|---|---|---|
| Product designer | 2–3 days | $1,500–$2,250 |
| Engineering pair | 5–8 days | $3,850–$6,160 |
| Product manager | 1–2 days | $760–$1,520 |

Because it's almost impossible to be accurate with time estimates when it comes to software delivery, we can pretty safely assume that the upper cost represented here (~$10K) is more likely to be the floor than the ceiling.

That's over double the cost of an annual Canny subscription. And that says nothing of the opportunity cost of dedicating a sprint cycle for 3-4 people to something that doesn't have a meaningful, immediate impact on our core product.

In a way, this is the core value proposition of a lot of SaaS businesses – we built it so you don't have to. And the decision framework is straightforward:

### Buy if:

value + build_cost > (subscription_cost × time_horizon) + integration_cost

### Where:

value = total estimated value of the service

build_cost = cost to build in-house

subscription_cost = annual subscription cost

integration_cost = cost to integrate

time_horizon = years you're evaluating over

When Canny was free, this was a no-brainer.

But a few things have changed pretty dramatically in the last two years – not just Canny's pricing model. There is no doubt in my mind that AI-driven development has massively reduced the time investment required to build product. While it is still difficult and risky to use AI development tools like Claude Code on sensitive parts of our codebase, it excels at delivering small solutions to well-scoped problems.

So could I build a rudimentary Canny clone with Claude, and deploy it to our production app in a time frame that tips the value proposition in favour of building it in-house? Could I do it in as little as 8 hours?

### Buy if:

value + build_cost > (subscription_cost × time_horizon) + integration_cost

### Where:

// build_cost calculated as:

// 1 day of my time ($650)

// + 2 hours of a senior dev to review code ($265)

// + Claude subscription ($6 per week)

value = ???

build_cost = 921

integration_cost = 0

subscription_cost = 4,360

time_horizon = 3

### Scenario A (Paid tier, 3 yrs)

Buy if: V + 921 > 13,080

→ Worth it if V > 12,159

With a build cost like that, is the convenience of staying with Canny worth more to us than $12,159 over three years? That's around 20 extra subscriptions per year at Unscripted. Is the Canny UX and feature set encouraging 20 people per year to sign up? Is it saving more than 20 people a year from churning? That's a much harder problem to figure out, but my gut says no.
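The arithmetic behind Scenario A is easy to check in a few lines of Ruby. This is only a sketch using the AUD figures quoted above; the method names (`build_cost`, `break_even_value`, `buy?`) are made up for illustration and don't come from any library.

```ruby
# Break-even check for the buy vs. build rule:
#   buy if value + build_cost > (subscription_cost * time_horizon) + integration_cost
# All figures are the AUD amounts quoted in this post.

def build_cost(my_day:, senior_review:, claude_week:)
  my_day + senior_review + claude_week
end

# The value the paid service has to exceed before buying beats building.
def break_even_value(build_cost:, subscription_cost:, time_horizon:, integration_cost: 0)
  (subscription_cost * time_horizon) + integration_cost - build_cost
end

def buy?(value:, **costs)
  value > break_even_value(**costs)
end

cost = build_cost(my_day: 650, senior_review: 265, claude_week: 6)
threshold = break_even_value(build_cost: cost, subscription_cost: 4_360, time_horizon: 3)

puts "build_cost: #{cost}"         # build_cost: 921
puts "break-even V: #{threshold}"  # break-even V: 12159
puts buy?(value: 5_000, build_cost: cost, subscription_cost: 4_360, time_horizon: 3) # false
```

Plugging in a different `time_horizon` or a non-zero `integration_cost` shows how quickly the threshold moves, which is really the point of writing the rule down.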

But is it realistic to think that I can build a good-enough solution to our original problems in less than a day?

The Build ☕️

The project broke down into three milestones:

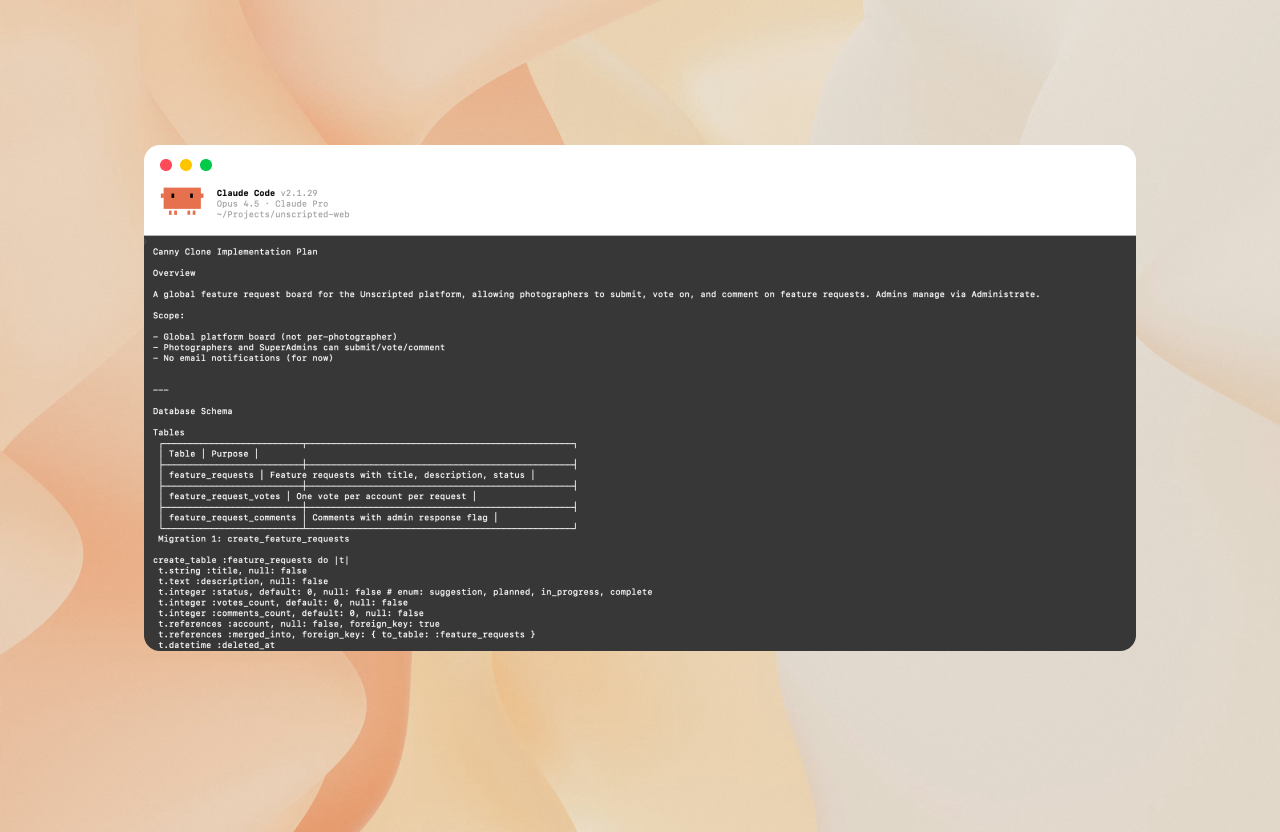

1. Data modelling and core functionality

Our platform runs on Ruby on Rails, and one of the underrated benefits of an opinionated framework is that AI is exceptionally good at following its conventions. Generating models, migrations, routes, controllers, and views for our new feature_request, feature_request_vote, feature_request_comment, and feature_request_event tables was close to trivial.
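To give a rough feel for how those four tables relate, here is a plain-Ruby sketch. It uses Structs as stand-ins for the ActiveRecord models, and every field name, the `cast_vote` and `change_status` helpers, and the sample request are assumptions for illustration, not our actual schema.

```ruby
# Plain-Ruby stand-ins for the four tables. In a Rails app these would be
# ActiveRecord models; every field name here is a guess for illustration.
FeatureRequest        = Struct.new(:id, :account_id, :title, :status, keyword_init: true)
FeatureRequestVote    = Struct.new(:feature_request_id, :account_id, keyword_init: true)
FeatureRequestComment = Struct.new(:feature_request_id, :account_id, :body, keyword_init: true)
FeatureRequestEvent   = Struct.new(:feature_request_id, :kind, :payload, keyword_init: true)

# One vote per account per request, the kind of rule a unique index would enforce.
def cast_vote(votes, request, account_id)
  already = votes.any? { |v| v.feature_request_id == request.id && v.account_id == account_id }
  return votes if already

  votes + [FeatureRequestVote.new(feature_request_id: request.id, account_id: account_id)]
end

# Changing status also records an event, so there is a history to show users.
def change_status(request, events, new_status)
  event = FeatureRequestEvent.new(
    feature_request_id: request.id,
    kind: "status_change",
    payload: { from: request.status, to: new_status }
  )
  request.status = new_status
  events + [event]
end

request = FeatureRequest.new(id: 1, account_id: 10, title: "Example request", status: "open")
votes = cast_vote([], request, 11)
votes = cast_vote(votes, request, 11) # duplicate vote is ignored
events = change_status(request, [], "shipped")

puts votes.size        # 1
puts request.status    # shipped
puts events.first.kind # status_change
```

Keeping status changes in a separate events table is what makes "see when a request has been shipped" cheap to answer later.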

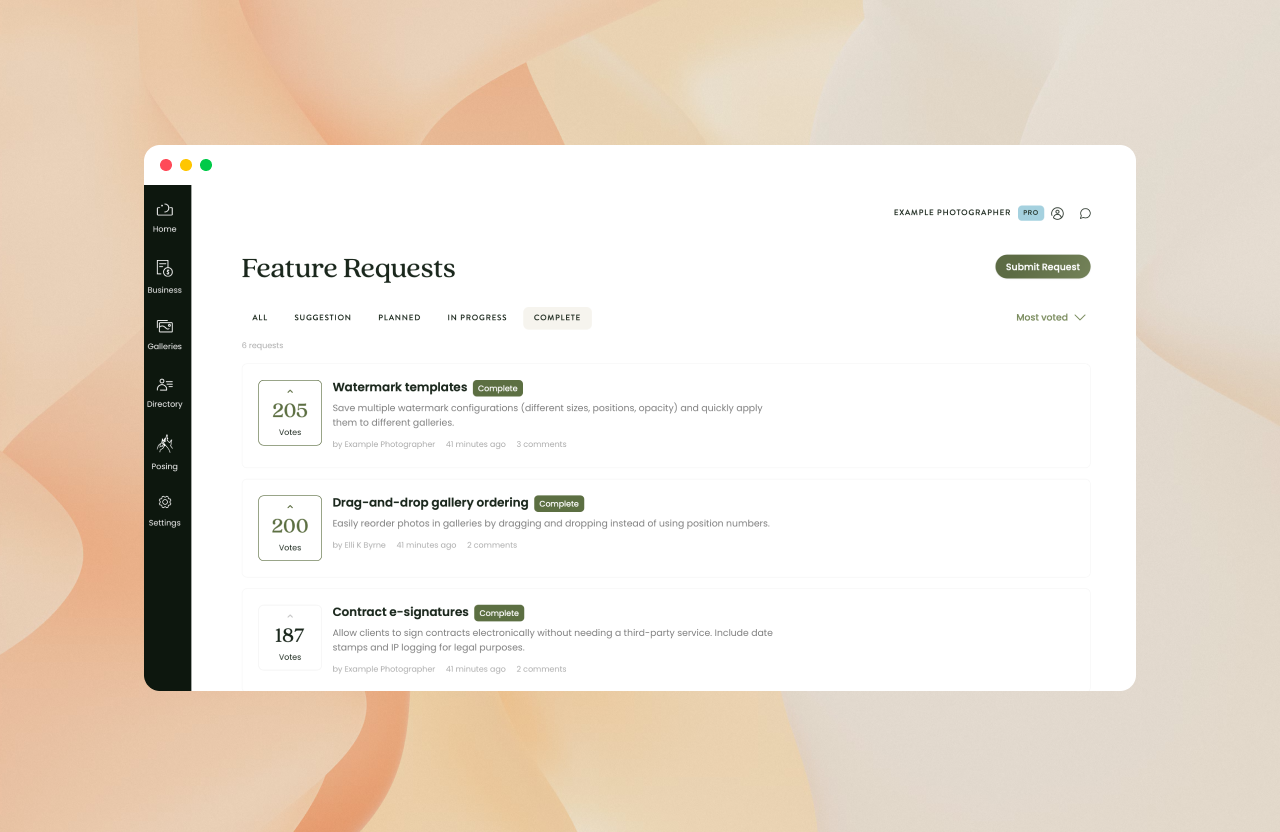

Claude's first pass at the frontend wasn't bad, but it invented new UI patterns instead of using our design system. After doing some fine tuning by hand to layer in our component library, icon set, and design tokens, the output snapped into shape quickly.

The core user stories were deliberately minimal:

See feature requests from other photographers

Create, vote on, and comment on requests

See when a request has been shipped

Canny does a lot more than this, but for our purposes – this is more than enough.

2. Admin tooling

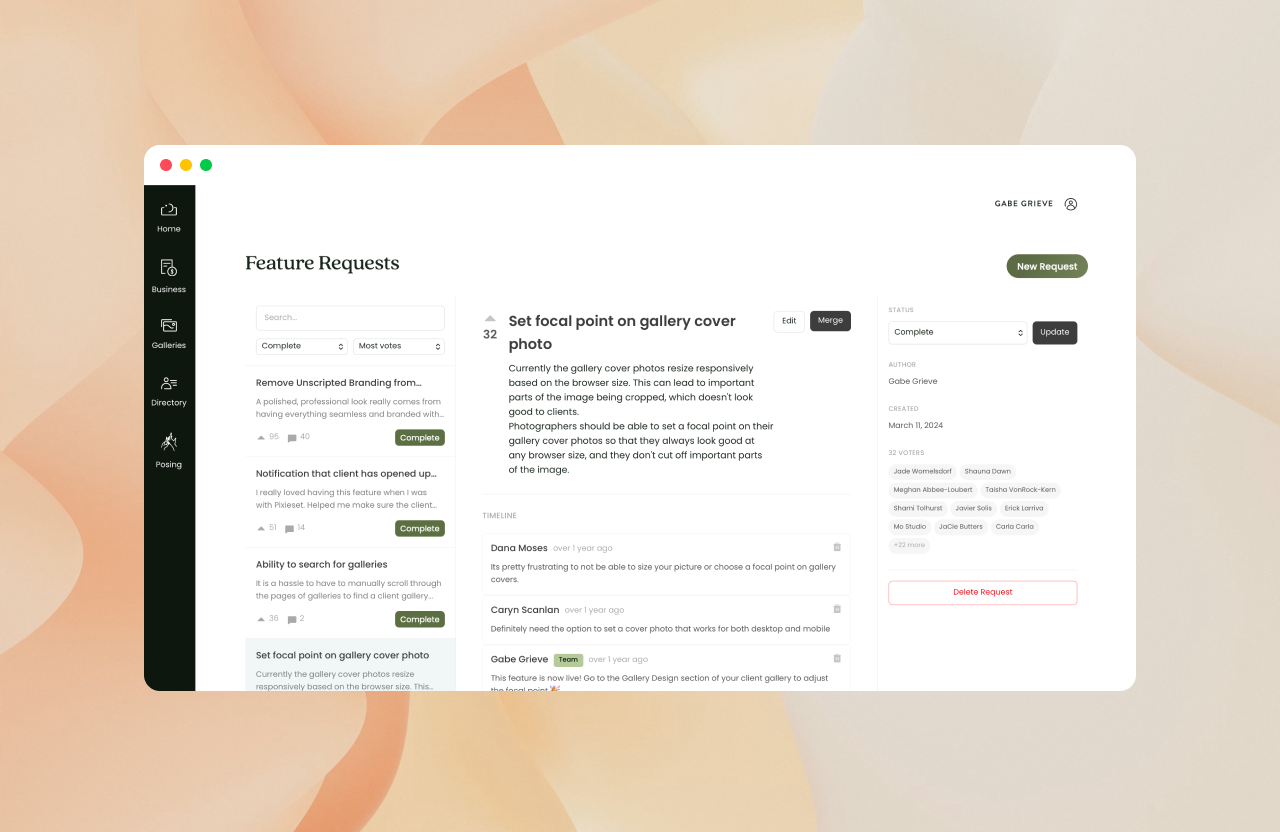

This is where things started to go a little pear-shaped. The initial plan was to allow the management of feature requests and comments via the Administrate dashboard. Administrate is a popular Ruby gem that provides a rudimentary dashboard for authenticated accounts to manipulate records in the database.

I implemented this, but very quickly it was clear that the UX of using Administrate was really clunky compared to the admin panel in Canny. Administrate is great for simple CRUD, but it falls apart when you need to view intertwined records in context: moderating comments, merging duplicate requests, and updating statuses all in one flow.

So I scrapped that approach and used Claude to build a custom three-panel admin dashboard from scratch. This was a significant step up in complexity: lots of state management, Turbo Frames and Turbo Streams for reactivity, and a UX that needed to feel familiar to our support team who were used to the Canny dashboard.

Where Claude had basically one-shotted the first milestone, this stage required substantial debugging, scope revision, and multiple rounds of review. The PR came in at 1,300+ lines — right at the edge of what I was comfortable shipping from AI-generated code.

We justified it because it was internal tooling, and both Copilot and our senior engineer reviewed it thoroughly, but there is a real feeling of discomfort shipping a large chunk of work where my personal contribution was limited to high-level prompting and reviewing.

3. Migrating two years of Canny data

Canny had locked us out of the admin panel, but their API was still accessible. The migration strategy was two-phased:

Phase 1: Export. Pull all posts, votes, and comments from the Canny API into temporary JSON files. Decoupling the export from the import gave us the flexibility to retry without hitting their API repeatedly.

Phase 2: Import. Match Canny users to our database via email, user ID, or business email, then hydrate our new tables.
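The matching step in Phase 2 boils down to trying a list of strategies in order. The sketch below shows that idea in plain Ruby. The `Account` struct, the shape of the Canny user hash, and the example.com addresses are all hypothetical stand-ins; the real script worked against our database.

```ruby
# Hypothetical shapes: an in-memory list of accounts and a Canny author hash.
Account = Struct.new(:id, :email, :business_email, keyword_init: true)

def normalise(email)
  email.to_s.strip.downcase
end

# Try each strategy in order and return the first account that matches, or nil.
def match_account(canny_user, accounts)
  email   = normalise(canny_user[:email])
  user_id = canny_user[:user_id].to_s

  strategies = [
    ->(a) { !email.empty? && normalise(a.email) == email },          # primary email
    ->(a) { !user_id.empty? && a.id.to_s == user_id },               # user ID
    ->(a) { !email.empty? && normalise(a.business_email) == email }  # business email
  ]

  strategies.each do |matches|
    account = accounts.find { |a| matches.call(a) }
    return account if account
  end
  nil
end

accounts = [
  Account.new(id: 1, email: "ana@example.com", business_email: "studio@example.com"),
  Account.new(id: 2, email: "ben@example.com", business_email: nil)
]

puts match_account({ email: "ANA@example.com" }, accounts)&.id               # 1
puts match_account({ email: "old@example.com", user_id: "2" }, accounts)&.id # 2
puts match_account({ email: "studio@example.com" }, accounts)&.id            # 1
puts match_account({ email: "nobody@example.com" }, accounts).inspect        # nil
```

Anything that returns `nil` here is what shows up as "skipped" in the import output.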

Claude's initial import approach tried to hold Canny post IDs in memory during the script run — not idempotent, no audit trail, and a partial failure would require a complete restart. We added a canny_post_id column to feature_requests instead. A small, temporary addition that made the migration dramatically more robust.
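To illustrate why keying on a stored Canny ID helps, here is a small plain-Ruby sketch of an idempotent import. The `FeatureRequestStore` class is an in-memory stand-in for the feature_requests table, and the JSON shape is an assumption rather than Canny's actual export format. In Rails, the same idea would presumably map to something like `find_or_create_by` on the canny_post_id column.

```ruby
require "json"

# In-memory stand-in for the feature_requests table, indexed by canny_post_id.
class FeatureRequestStore
  attr_reader :rows

  def initialize
    @rows = {}
  end

  # Insert only if this Canny post has not been imported yet.
  # Returns true when a row was created, false when it was already present.
  def import(post)
    key = post.fetch("id")
    return false if @rows.key?(key)

    @rows[key] = { canny_post_id: key, title: post.fetch("title") }
    true
  end
end

# Reads the exported JSON and reports how many rows were created vs. skipped.
def import_posts(store, exported_json)
  posts = JSON.parse(exported_json)
  created = posts.count { |post| store.import(post) }
  { created: created, skipped: posts.size - created }
end

export = JSON.generate([
  { "id" => "abc", "title" => "Example request A" },
  { "id" => "def", "title" => "Example request B" }
])

store = FeatureRequestStore.new
first_run  = import_posts(store, export)
second_run = import_posts(store, export) # re-running creates nothing new

puts "first run created:  #{first_run[:created]}"  # first run created:  2
puts "second run created: #{second_run[:created]}" # second run created: 0
puts "rows stored:        #{store.rows.size}"      # rows stored:        2
```

Because the key lives with the row rather than in the script's memory, a run that dies halfway can simply be started again.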

After testing locally, we ran the import in our staging environment. We frequently take snapshots of our production database to use on staging, so it should have given us an accurate read on how many records would fail to match.

Importing posts...

Imported 38 posts (367 skipped - no matching account)

Importing votes...

Imported 37 votes (7375 skipped)

Importing comments...

Imported 18 comments (644 skipped)

Processing merges...

Created 71 merge events (92 skipped)

Ouch. Initially, I thought that the account lookup functionality in the import script was broken. But it turned out that our staging database was actually very dated, and had missed a key rake task on a table we were relying on for our import. After fixing that and adding fallback matching on user IDs and business emails, production told a much better story:

Importing posts...

Imported 379 posts (27 skipped - no matching account)

Importing votes...

Imported 6049 votes (1359 skipped)

Importing comments...

Imported 517 comments (145 skipped)

Processing merges...

Created 159 merge events (4 skipped)

Importing status change events...

Imported 48 status change events (14 skipped)

Import complete!

Feature requests: 379

Merged source posts: 159

Votes: 6049

Comments: 517

Status change events: 48

Merge events: 159

Pretty good. We got about 93% of our feature requests over into the production database. There were a fair few users who we couldn't match accounts for in our database – especially voters and commenters – but I can live with that.
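For the curious, the match rates fall straight out of the imported and skipped counts in the production log above. A quick sketch (the `import_rate` helper is just for illustration):

```ruby
# Imported vs. skipped counts copied from the production log above.
counts = {
  posts:    { imported: 379,  skipped: 27 },
  votes:    { imported: 6049, skipped: 1359 },
  comments: { imported: 517,  skipped: 145 }
}

# Percentage of records that made it across, rounded to one decimal place.
def import_rate(imported:, skipped:)
  (100.0 * imported / (imported + skipped)).round(1)
end

counts.each do |name, c|
  puts "#{name}: #{import_rate(**c)}%"
end
# posts: 93.3%
# votes: 81.7%
# comments: 78.1%
```

Posts came across best; votes and comments were lower, which lines up with voters and commenters being the harder accounts to match.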

The final score

Total time: ~14 hours for me, ~2 hours for our senior engineer. Not far off the original estimate, and a fraction of the ~92 hours it would have taken a small team to build without AI assistance.

From there it was a demo with the support team, rewiring the public roadmap to read from our database instead of Canny's API, and swapping out the Intercom integration for a simple link to the new page.

With that, we'd fully deintegrated, saving roughly $15K+ AUD over three years.

There are gaps: email notifications, admin alerts for new requests, and a few polish items. But the core problems are solved, and we have gained the additional benefit of ensuring that all future requests, votes and comments can be attributed directly to a user in our database.

What I took away from this

There are a few takeaways from me now that I'm at the end of this process.

Senior engineers are still essential for sanity checking

There were times when my confidence in what Claude was suggesting was nowhere near as high as it needed to be to feel comfortable committing changes to prod. Having a senior engineer watching my back and double-checking the work was a critical part of this process.

Using AI to review PRs works pretty well, but it can also pretty easily end up going around and around in circles. A strong test suite, experienced engineers, and a proper staging environment caught several significant mistakes in Claude's code.

Companies that train their designers to be able to do this kind of work will excel.

Part of me reflects on the ease with which I was able to do this and wants to scream in frustration at the vast amount of time I spent learning software development. While that foundation gave me the confidence and contextual awareness to be able to deliver on this project, I don't think I will have that advantage for very long.

The best software companies are already building out low-risk environments in which non-engineers can build production-ready software that might actually make it to the main branch. Design roles will go through another evolution that positions them as both idea shapers and builders. I believe that the startups that can capitalise on this will be the most competitive.

It's a scary time to run a SaaS company without a pretty strong technical moat.

There is certainly a level of guilt I feel for effectively stealing Canny's excellent, well-researched product design and implementing it directly into our product. I think what they've built is genuinely great. While I'm obviously biased by the fact that we got it for free for so long, I'd like to believe that I would have paid for it, no question, if they had got the pricing right for our company.

Software companies have been stealing each other's ideas for as long as software has been a thing. But the ease with which you can now replace a simple, yet expensive integration with something you built internally, makes it very difficult to justify paying for all but the most sophisticated SaaS products.

Most of our subscription budget is soaked up by genuinely excellent and technically sophisticated products. Intercom comes to mind – without it we would be lost, and the idea of trying to build a replacement ourselves is ludicrous. But I'm certainly looking sideways at some of our less sophisticated services.

The Claude subscription irony

The other point of tension here is Claude itself. Claude's value proposition right now is laughably good compared to the manual alternative. But what happens if that subscription cost increases 10x? 100x? Frankly, it's probably still worth our company paying for, and that's a bit of a scary thought.

When anyone can build anything, discipline matters more

It is tempting to look at all of these feature requests and imagine a world where we smash out the top 50 requests within the next 6-12 months. But would that mean our product would be 50x better? Or make 50x the amount of money? Obviously not. It would make it a bloated, poorly considered mess. When building is no longer the bottleneck, the traditional design responsibilities of idea validation and solution consolidation become more critical than ever.

This was a small project, but it crystallised something I've been feeling for a while. The calculus of build vs. buy is shifting fast, and it's not done shifting. If you're running a product team at a startup, it's worth revisiting your SaaS stack with fresh eyes. Some of those line items might be worth a weekend.